A10-5800K vs. Core i3-3220 CPU Review

Introduction

This week AMD released its latest generation of CPUs with integrated video, dubbed “Trinity.” The A10-5800K is currently the highest-end model available, running at 3.8 GHz and costing USD 130. Let’s see how it fares against its main competitor, the Core i3-3220 (3.3 GHz, USD 130). We also included in our comparison the previous highest-end AMD APU, the A8-3870K (3 GHz, USD 110 currently, USD 145 at its release), and what was then its main competitor, the Core i3-2105 (3.1 GHz, USD 135).

It is interesting to see that AMD has started using the same letter as Intel, “K,” to indicate CPUs with an unlocked clock multiplier.

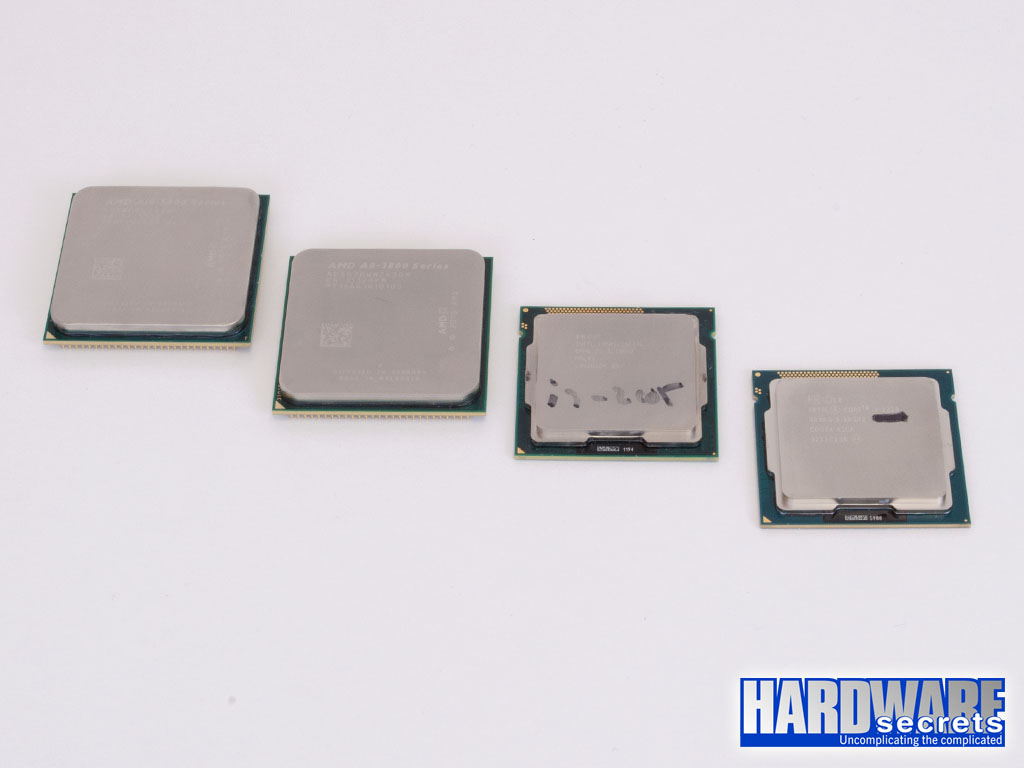

The “Trinity” APUs use a new CPU socket, called FM2, which is incompatible with the FM1 socket used by the previous generation of APUs (codenamed “Llano”). The two sockets are physically different, so it is not possible to install an FM1 CPU in an FM2 socket or an FM2 CPU in an FM1 socket. The two Core i3 CPUs included in our review use the LGA1155 socket. In Figures 1 and 2, you can compare the physical appearance of the four CPUs included in our review.

Figure 1: The A10-5800K, the A8-3870K, the Core i3-2105, and the Core i3-3220

Figure 2: The A10-5800K, the A8-3870K, the Core i3-2105, and the Core i3-3220
